The Changing Science of Memory

Tedd Roberts

The old joke says "The first sign of old age is losing your memory; unfortunately, I can't remember the second sign..." While many folk might argue which afflictions are the most inevitable when it comes to aging, the fact that memory is affected is not in question.

Society used to call this "senility" and it was an accepted fact that the older a person, the more memory was affected. Senility was most frequently seen in people aged in their late 70s through their 90s (when they lived that long). However, awareness in the medical fields was growing that effects of age on memory were not uniform—there were conditions of "pre-senile dementia" in which signs of senility would show up in persons in their 50s and 60s—well before any of the normal signs of memory loss and brain impairment should have occurred.

Part of the problem in the diagnosis was that "senility," "dementia" and memory loss were not synonymous. Senility and "senescence" are just terms for wearing out or breaking down with age. Cars, houses, and appliances that are used well past their intended design life show senescence and eventually break down past repair. Dementia refers to abnormalities in the normal function of the brain; this can be memory, of course, but also includes personality changes, hallucinations, inability to make appropriate decisions, and other mental aberrations. Thus loss of memory can be part of senility and dementia, but is not necessarily the whole story.

In the late 1960s and 1970s a new diagnosis became prominent: Alzheimer's disease. It became (and remains) trendy for any memory disruption or early-age dementia to be called Alzheimer's (or AD) despite the fact that the clinical diagnosis requires much more than forgetting a few names or dates! My first encounter with this field of research (memory), in which I would spend nearly all of my adult life, occurred in 1979: I was visiting my parents' home at a time when my grandparents were in residence. My grandmother had experienced something that altered her personality and behavior. Family members latched onto the suggestion that it was AD (despite lack of medical evaluation). I, a naïve pre-med student, tried to argue that the key criterion for AD was that the dementia be pre-senile (at the time, meaning well before age 70). My grandmother was about 70 years old at the time, and I argued that classically described senile dementia was not necessarily out of the question.

I was later proved right, then wrong, then right again.

Part I: Alzheimer's Obsession

In 1901, German psychiatrist and neuropathologist Aloysius Alzheimer described a case of "pre-senile dementia" in a 51-year-old patient. The key diagnosis was failure in short-term memory, i.e., temporary memories such as remembering a phone number long enough to make a call, or memories for new information that has not been previously encountered or committed to "stored" memory.

For the next 5 years, Alzheimer studied his patient, and after death, used Franz Nissl's unique method of silver-staining brain tissue to find out what had happened. The brain tissue of Alzheimer's key patient had abnormal formations (called plaques and tangles) in much of the brain, most notably the hippocampus and temporal lobe as well as pre-frontal areas. Alzheimer's diagnosis was written into a German medical textbook by friend and colleague Emil Kraepelin, who named it "Alzheimer's Disease."

The 1950s saw a surge in the neurosciences in the U.S. and around the world. Researchers began looking in earnest at the association between the "plaques and tangles" and neurological diseases. The plaques were identified as deposits of amyloid protein, which results when normal proteins are incorrectly synthesized. Tangles occur when "neurotubules" (long filaments of protein forming the internal structure of neurons) likewise become abnormally formed and clump into insoluble aggregates. Even with better tools for detecting the pathology of AD, the diagnosis remained one that could only be confirmed by careful examination of the brain after death.

The most prominent diagnostic symptom in live patients was an early onset of the types of memory loss normally seen in patients 20-30 years older. Thus, my semi-informed opinion that my grandmother's condition was not AD was still supported for a few more years.

H.M.

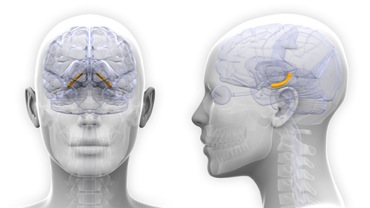

Still, the 50s gave the field of neuroscience several key pieces of information regarding memory and how it works. In 1953, Henry Molaison underwent surgery in hopes of stopping his epileptic seizures. Medication had not worked, and doctors had determined via EEG that the seizures started in the vicinity of the hippocampus, a structure in the temporal lobes whose function was mostly unknown at the time. The surgery removed most of the hippocampus on both sides, along with many adjacent areas. The operation successfully stopped the seizures, but it left the patient, known in medical and science texts for the next 50 years simply as "H.M.," with a profound amnesia. Most importantly for the diagnosis of AD and other memory disorders, the type of amnesia exhibited by H.M. was "anterograde amnesia," a failure of short-term memory in which the patient is unable to retain temporary information for more than a few minutes and, in turn, unable to turn that information into permanent memories. The fact that H.M.'s amnesia was so similar to the diagnosis by Alzheimer led scientists to re-examine the pathology of AD and carefully consider the role of the hippocampus in memory. The AD-related plaques and tangles did indeed appear in the hippocampus, and further experimentation in animals confirmed that an intact hippocampus was essential for making and holding short-term memories as well as converting them into long-term memories.

The hippocampus (yellow) is located inside the temporal lobes on either side of the brain. ©2015, decade 3d-anatomy online, image licensed from Shutterstock.

Still, the diagnosis and pathology of AD were continuing to develop. Psychiatrists and neurologists had suspected that the personality changes might be caused by damage to the anterior (forward) portions of the brain. The presence of plaques and tangles in the pre-frontal cortex was supporting evidence that "dementia" was as important (or more so) in diagnosing AD.

Unfortunate Luck

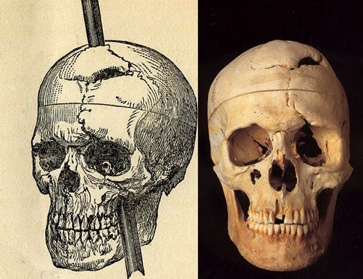

To understand the link between personality and the frontal lobe of the brain requires that we examine the case of Phineas Gage. In 1848, Gage was twenty-five years old and a railroad foreman, responsible for checking the blasting charges used to cut holes in rock to clear the way for railroad construction. Whether it was distraction or sheer coincidence, the normally meticulous foreman suffered an accidental explosion while he was tamping a charge (to ensure that the blasting powder was uniform and tightly packed in the hole). The tamping rod was over three feet long, thirteen pounds in weight, and 1.25 inches in diameter at its widest, tapering to a point at its upper end. The explosion drove the rod through Gage's skull; it entered the left cheek, passed behind the left eye and exited through the top of the head just behind the hairline. Miraculously, Gage lived. The doctor who treated Gage was amazed that he was awake, walking and talking after the incident.

The skull of Phineas Gage on display in the Warren Anatomical Museum, Harvard Medical School. Photo courtesy of Harvard University.

I first encountered the story of Gage in graduate school, where we learned that after the accident the hard-working, responsible young man had become rough and vulgar, bordering on the sociopathic. Psychiatrists surmised that the personality change was related to the frontal lobe damage. They would prove to be correct in assigning this function, even though the facts of the case were not. Sam Kean's 2014 book The Dueling Neurosurgeons reveals that there is much about Gage's story that has been distorted, or that was never taught in neuroscience or psychology classrooms. The personality changes were likely transitory, and the brain damage was never quite the same as in the legend.

Still, the case led neuroscientists to study the function of the frontal lobes, and those studies greatly contributed to our understanding. The frontal lobes are important in planning and controlling movements, but more importantly, planning all decisions that we make. Commonly called "executive function," the front of the brain is involved in many of the functions that contribute to personality, behavior, morality, risk assessment and judgment. This brings us back to the brain areas damaged in Alzheimer's disease. Besides the hippocampus and temporal lobe, AD plaques and tangles are found in the ventral (lower) frontal and pre-frontal areas as well, and may account for the personality changes seen with AD.

But at least AD leaves the oldest memories, skills and sensory processing intact, right? After all, the diagnosis involves short-term memory, and the plaques are less pronounced in the parietal lobe (sensory inputs and long-term memory), occipital lobe (vision) and deep brain structures.

Not exactly; advanced AD is accompanied by a loss of nearly all memory, loss of coordination, auditory and visual hallucinations, and the eventual loss of automatic body functions. Even brain areas with few signs of AD require input and output connections with the rest of the brain. Advanced AD shrinks brain mass and increases the fluid-filled spaces in the brain, eventually compromising all brain and body functions. Eventually the loss of memory extends to both short-term and long-term memories and skills. Memories and skills are stored in the connections between neurons (more on that later) and as brain mass is lost, so is overall brain function. It is fairly common with patients in advanced AD that they essentially regress to a state where they have little to no awareness of the world and have lost memories of loved ones, and even themselves. AD (as with many related memory disorders) is a sad syndrome, since it robs humans of their essential self. It affected my own family again quite recently, and I am sure most of you reading this article can say the same.

June is Alzheimer's disease Awareness Month, and researchers are definitely aware of our changing understanding of the disease. Eventually we would come to realize that many of the processes of AD occur at various ages—in fact, any form of senility was a result of degenerative processes that were not normal aging. Yes, normal aging causes some decline, but the sudden changes associated with AD were not 'normal' in any sense of the word.

Part II: Changing Science

The above story of the discovery and understanding of Alzheimer's disease highlights how neuroscience and our understanding of memory have changed over the years. Memory loss was a normal part of aging, then AD showed that memory loss could occur at an earlier age. Moreover, further study suggested that even age-related dementia was related to AD, and was not a necessary condition of aging. AD involved only short-term memory, but then was discovered to affect many overlapping brain functions. Likewise, our understanding of how memory is formed and stored has undergone many changes—and sometimes, it changes right out from under us.

Early neuroscience postulated the "engram" as a lasting manifestation of an experience. Psychologist Karl Lashley wrote In Search of the Engram in 1951, chronicling his research into finding the single site in the brain where memories were stored. By the time of his death in 1958, he had disproved the "single site" hypothesis for the engram, and had concluded that memories must be distributed around the brain (but still as identifiable engrams). His theories of "mass action" suggested that both the rate of learning and the capacity for it were limited by the amount of cortex (the "surface" of the brain, consisting of layers of brain cells, or neurons). Thus, as people aged and "filled up their cortex," the ability to learn and remember decreased. At the same time, Lashley believed that within a particular area of the brain there was "equipotentiality," i.e., any part of the brain could take over the function of any other part of the brain. As always, the ideas were both right and wrong: not all brain areas are "equipotent," but we now know that there is incredible "plasticity" in the brain that allows recovery after damage. We have also learned that memory is very likely distributed across many areas of the brain, but the "cortical limits" that Lashley theorized are not quite as stringent as he thought. Still, the concept of the engram as a lasting signal or code for a memory has a lot of value.

Early theories of memory considered it to be a physical "trace" left behind after an experience, much like a picture. ©2015, Mariia Masich, image licensed from Shutterstock.

From Flatworms to Fiction

From the early twentieth century, neuroscientists had a pretty good clue about the relationship between the actions of specific cells in the brain and the information that we perceive. Lashley himself was instrumental in identifying vision-processing areas of the occipital cortex. Vernon Mountcastle identified "cortical columns" in the late 1950s and 60s that appeared to organize the cortex of the brain into discrete processing circuits. David Hubel and Torsten Wiesel, in the 1960s, showed that such columns in the visual cortex of the occipital lobe represented discrete components of vision. Thus, memory researchers continued to search for discrete representations of memories in the brain, but a series of experiments in the same timeframe suggested a different story.

Biologist James McConnell found that planarians (flatworms) could be trained to navigate very simple mazes, and avoid regions where they encountered an aversive stimulus (usually electric shock, but McConnell reported that a stinging / irritating chemical would also work). Once the flatworms had learned the maze, he would grind them up and mix the substance with food for other flatworms. Those planaria then showed evidence of the memory for the maze and avoided the regions where their predecessors had encountered aversive stimuli. McConnell postulated that memories were stored as molecules that the flatworms ingested from the remains of the trained worms. Given the recent discovery of chemical genetic coding, it seemed that the most likely candidate for a memory molecule was Ribonucleic Acid, RNA—the chemical which formed the copy of the genetic instructions encoded in Deoxyribonucleic Acid, DNA.

. . . and thus a science fiction meme was born.

Larry Niven made extensive use of the concept in his novel A World Out of Time. In the novel, persons who had their bodies frozen to escape death, or in hopes of miracle cures, found themselves awake hundreds of years later in different bodies. Science had never found a way to repair the damage caused by freezing, but a person's memories and personality could be saved by essentially "grinding up the corpsicle" (sic) and transferring those memories to a body that had been wiped of its own memories. Similar scenes played themselves out from graphic novels (Swamp Thing) to TV (Star Trek: The Next Generation).

Unfortunately, by the early 1970s, the planaria experiments were discredited. No one could reproduce the studies, and even McConnell himself admitted that the overall concept of transferring memories via molecules was not the explanation for his earlier results. [Notably, McConnell went on to gain notoriety publishing satirical articles aimed at both himself and fellow scientists, leading people to wonder if he had ever observed the results reported in those early studies, or if it had been an elaborate joke.] Thus, the idea of a memory as molecules would just have to remain as fiction . . . for now.

Hebb's Theory

Donald O. Hebb was a student of Karl Lashley in the 1930s and 40s. He had extensively studied the evidence from Dr. Hans Berger (1929) that the brain showed continuous electrical activity no matter what activity it was engaged in. Thirty years earlier, Ivan Pavlov had postulated that the brain was only active in response to a stimulus or when trained to expect a stimulus (hence his famous experiments in what would come to be known as "Pavlovian conditioning"). Berger, and then Hebb, noted that there was always electrical activity in the brain, and not just when there was an event to stimulate it. Hebb went on to study this electrical activity in depth, and theorized that neurons could change their firing if the connections between them were changed. Furthermore, there was a precise circumstance which could cause a connection to be strengthened between neurons: i.e. if an output was active at the same time a new input arrived, the connection from input to output would be strengthened, making any signal through that connection "potentiated," i.e. faster, stronger, and more precise. This became known as Hebb's Postulate, but it would take another seventeen years (from publication in 1949 to experiments on long-term potentiation in 1966) before this effect would be demonstrated.
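
The rule can be stated in a few lines of code. What follows is only a minimal sketch in Python, assuming a single connection whose strength is one number; the function name `hebbian_update`, the starting weight and the learning rate are illustrative choices, not values from Hebb's work.

```python
def hebbian_update(weight, input_active, output_active, learning_rate=0.1):
    """Return the connection strength after one moment of activity.

    Illustrative rule (an assumption of this sketch): the connection grows
    only when an input arrives while the output neuron is active;
    in every other case it is left unchanged.
    """
    if input_active and output_active:
        return weight + learning_rate
    return weight


# Hypothetical sequence of (input_active, output_active) moments.
events = [(True, False), (False, True), (True, True), (True, True)]

weight = 0.2  # arbitrary starting strength
history = [weight]
for input_active, output_active in events:
    weight = hebbian_update(weight, input_active, output_active)
    history.append(weight)

print(history)
```

Only the last two moments, where input and output coincide, change the weight. Real synapses are far messier, but the coincidence requirement is the heart of the postulate.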

The famous neuroscientist Santiago Ramón y Cajal gave a lecture in 1894 in which he contradicted the then-current thinking about the brain and memory. Instead of requiring new neurons to hold new memories, he said, memories might instead use existing neurons, but strengthen the connections between them. Fifty-five years later, Hebb's theory suggested the means, and then seventeen years later, researchers saw the first experimental evidence of strengthening neural connections in living brain tissue. In 1966, Terje Lømo was a graduate student in the lab of Per Andersen in Oslo, Norway. He was studying the responses of neurons in rabbit hippocampus to electrical stimulation delivered "presynaptically," that is, to the "upstream" neural connections (synapses) onto those neurons. A single electrical pulse to the inputs caused a corresponding natural electrical response of the neurons. Incidentally, Lømo discovered that if he delivered very fast trains of repetitive electrical stimulation (100 per second, for about one second), waited a minute, then delivered single electrical pulses, the response of the neurons had increased. This "long-term potentiation," or LTP, would last for minutes to hours, and became the first demonstration of the type of synaptic modification Ramón y Cajal and Hebb felt would underlie new memory storage.

In the subsequent forty-nine years, we learned a lot about LTP—in the first place, Hebb was correct: the repetitive stimulus caused neurons to still be active when the next input arrived, producing a cascade of intracellular events including gene activation and protein synthesis that resulted in stronger synaptic connections between the input and the target neuron. We have also learned much more about the conditions required for changing synaptic connections—including strengthening and weakening those connections, and that the input requirements are more about timing than repeating stimuli. Whether LTP is the exact mechanism of memory formation is still in debate, but it did provide several clues about memory being a phenomenon of discrete electrical signals in the brain.
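
The point about timing can be made concrete with a toy rule. This is a sketch under stated assumptions, not a model taken from the LTP literature cited here: the function name, the 20-millisecond window, the exponential shape and the maximum change are all hypothetical values picked for illustration.

```python
import math


def timing_dependent_change(dt_ms, max_change=0.05, window_ms=20.0):
    """Change in connection strength for one input/output pairing.

    dt_ms is the time of the output neuron's activity minus the time of
    the input (assumed convention). Positive means the input came first.
    """
    if dt_ms > 0:
        # Input shortly before output: strengthen, more so when closer in time.
        return max_change * math.exp(-dt_ms / window_ms)
    if dt_ms < 0:
        # Input after the output has already fired: weaken.
        return -max_change * math.exp(dt_ms / window_ms)
    return 0.0


for dt in (5, 40, -5, -40):
    print(dt, round(timing_dependent_change(dt), 4))
```

In this sketch the same pair of neurons can have its connection strengthened or weakened depending only on the order and spacing of activity, which is the sense in which timing matters more than sheer repetition.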

The Age of Cyber

One of the challenges of the sciences has always been how to explain scientific principles to the public. The easiest way is by analogy, and it was not long before science communicators latched onto the idea that since the brain (and memory) operates on the basis of electrical signals and active (or inactive) neurons, it must operate like a computer. Again, science fiction took off with new speculation about brain-to-computer interfaces, soon followed by total digital simulation environments that presaged the first steps into virtual reality. Many readers will likely point to Bruce Sterling and the stories which came to define the cyberpunk genre, but my first notice of the blending of "cyber" and SF was in several books by James Hogan: The Genesis Machine included descriptions of brain-to-machine interfaces, while Realtime Interrupt featured complete virtual reality environments. Not surprising, really, since Hogan worked in the computer industry at the time.

Human neuroscience infiltrated the computer age at the same time the computer age infiltrated neuroscience. Electronic information storage devices were commonly called "memory" while advanced analysis of brain functions utilized "information theory" and computed the number of bits that could be encoded by assemblies of neurons. As both sciences progressed, the neuroscientists started looking for patterns and structures that would serve as memory storage, while the computer scientists used the parallel connections between neurons as a template for computational systems based on "networks of neuron-like processing nodes," or neural networks.

Instead of being like digital computers, however, neural networks employ analog principles in the connections between processing nodes, much like the analog computers developed as bombsights, electrical power system controls and Enrico Fermi's FERMIAC computer for physics calculations. A shortcoming of analog computers is that it is difficult to repeatedly obtain the exact same results, unlike digital computers. However, as evidenced by their use in gun, torpedo and artillery fire-direction computers, analog systems could compute a "best guess" solution much more rapidly and reliably than early digital computers. As neural network studies increased in the 1970s through 90s, neuroscientists realized that the "best guess" was very good indeed, and the systems mimicked many of the same techniques used by the brain to turn the information from sensory inputs into behaviors.

As neural network simulations turned to the process of memory, several exciting findings suggested that the scientists were on the right track for simulating human memory: the networks could perform pattern completion and link separate items in a temporal sequence. A key principle in neural network models is that the network consists of dozens to hundreds of processing elements connected and functioning in parallel. Thus, instead of remembering one small piece of data, the network is presented with a large chunk of data at once, then rules such as Hebb's postulate are applied to strengthen the connections between the processing elements, resulting in a desired output. Once training was complete, any time that input was applied, the same result would occur; however, it was soon discovered that the complete input need not be present each time. In fact, up to two-thirds of the input could be missing, yet the neural network would reliably produce the exact same output. When re-imagining the network as memory storage, scientists would set the network input to mimic the pixels of a photograph, and have the output reproduce the pixels of that same image. Partial and incomplete inputs still resulted in the whole picture as an output. This, thought scientists, explained the ability of human memory to recall complex information with just small prompts or incomplete recollections.
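
Pattern completion of this kind can be reproduced in a few dozen lines. The sketch below is one simple way to do it in Python, assuming a tiny network of twelve units that are either on (+1) or off (-1); the two stored patterns, the function names and the single-pass recall are illustrative assumptions, not a reconstruction of any particular published model.

```python
def train(patterns):
    """Build connection weights with a Hebbian rule: two units that share
    the same state in a stored pattern have their link strengthened,
    and units in opposite states have it weakened."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j]
    return weights


def recall(weights, cue):
    """One update pass: each unit takes the sign of its weighted input."""
    n = len(cue)
    result = []
    for i in range(n):
        total = sum(weights[i][j] * cue[j] for j in range(n))
        result.append(1 if total >= 0 else -1)
    return result


# Two hypothetical twelve-"pixel" pictures (+1 = on, -1 = off).
PATTERN_A = [1, 1, 1, 1, 1, 1, -1, -1, -1, -1, -1, -1]
PATTERN_B = [1, -1, 1, -1, 1, -1, 1, -1, 1, -1, 1, -1]

weights = train([PATTERN_A, PATTERN_B])

# Keep only the first third of each picture; 0 marks a missing pixel.
cue_a = PATTERN_A[:4] + [0] * 8
cue_b = PATTERN_B[:4] + [0] * 8

print(recall(weights, cue_a) == PATTERN_A)
print(recall(weights, cue_b) == PATTERN_B)
```

With two-thirds of the input blanked out, each cue still pulls back the full stored picture. The patterns here were chosen to be easy to tell apart; with many similar patterns, recall in such a simple network starts to blur.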

Yet another feature of neural networks might also explain this ability to perform "pattern completion" similar to human memory. If a small portion of the neural network output is redirected back to the input, then the inputs can be grouped in a series or sequence. Part of each stored pattern in the sequence is a link to the previous and next patterns that have been stored. Inputting only the first cue then allows the entire "associative memory" to be retrieved in the sequence in which it was stored.
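
The sequence idea can be sketched the same way. In this minimal Python example, which is an illustration under assumptions rather than any specific published network, the weights link each stored pattern to the one that followed it, and the output of each step is fed back in as the next input. The three eight-unit patterns and the function names are hypothetical.

```python
def train_sequence(sequence):
    """Strengthen links from each pattern's units to the units of the
    pattern that came next in the stored sequence."""
    n = len(sequence[0])
    weights = [[0] * n for _ in range(n)]
    for current, following in zip(sequence, sequence[1:]):
        for i in range(n):
            for j in range(n):
                weights[i][j] += following[i] * current[j]
    return weights


def step(weights, state):
    """Produce the next pattern from the current one."""
    n = len(state)
    return [
        1 if sum(weights[i][j] * state[j] for j in range(n)) >= 0 else -1
        for i in range(n)
    ]


def replay(weights, first_cue, steps):
    """Feed the output back into the input to walk through the sequence."""
    state = list(first_cue)
    recalled = [state]
    for _ in range(steps):
        state = step(weights, state)
        recalled.append(state)
    return recalled


# Three hypothetical eight-unit patterns stored in order.
SEQUENCE = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, 1, -1, -1, 1, 1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]

weights = train_sequence(SEQUENCE)
recalled = replay(weights, SEQUENCE[0], steps=2)
print(recalled == SEQUENCE)
```

Given only the first pattern as a cue, the loop retrieves the second and third in the order they were stored.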

In Search of the Engram. Again.

With these findings, memory had been solved! Right?

Not entirely, but neuroscientists had a clue what to look for and where to look in the brain for memory patterns. Unfortunately, despite the ever-increasing precision with which modern science explores the brain, researchers still have not identified the location of "memory banks" holding discrete memories. There was strong evidence for the importance of the hippocampus and nearby structures for the storage of memory, but the evidence from H.M. and other cases showed that later damage to the hippocampus did not erase the memories that were already stored. Many pieces of information started coming together, though. Memories potentially can be created by changing the strength of connections between the individual cells, or neurons, in the brain. There are billions of neurons, and trillions of connections in the brain, such that a lifetime of memories could be encoded without running out of storage. Localized brain damage to a small area of the brain might not affect already-stored memories, but widespread damage could. Was it possible, then, that memory was distributed all throughout the brain?

An example of a holographic pattern: elements of a single image distributed and repeated with sufficient detail that the whole (three-dimensional) image can be reconstructed from only a portion of the stored data. ©2015, Perfect Vectors, image licensed from Shutterstock.

This theory of memory came to be thought of as a "holographic" theory of memory and the brain. It is also a fractal theory, in the sense that information is repeated in ever-finer detail the more precisely we look at the connectivity of the brain. The reasoning behind this view is that scientists have come to understand that many of the processes in the brain are not simply analog, but nonlinear, chaotic and fractal. The hallmark of nonlinear systems is that the starting conditions are essential to the outcome of the process. Neuroscientists know that communication between neurons is never the same twice in a row because the release of chemical neurotransmitters depends on prior instances of release. Overall, the effects might be similar, but two identical stimuli result in different absolute counts of molecules released, electrical charges passed, etc. Yet out of this seeming chaos, coherent patterns do emerge, and they bear similar features to both fractals and holograms.
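
The dependence on starting conditions is easy to see in a toy nonlinear system. The sketch below uses the logistic map, a standard textbook example of chaos; it is not a model of neurons and does not come from the research described here, and the starting values and step count are arbitrary choices for illustration.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x), a simple nonlinear rule."""
    values = [x0]
    for _ in range(steps):
        values.append(r * values[-1] * (1.0 - values[-1]))
    return values


# Two starting points that differ by one part in a billion.
run_a = logistic_trajectory(0.2, steps=60)
run_b = logistic_trajectory(0.2 + 1e-9, steps=60)

# Gap between the two runs at every step.
gaps = [abs(a - b) for a, b in zip(run_a, run_b)]

print("gap at start:", gaps[0])
print("largest gap:", max(gaps))
```

The two runs begin almost identically and end up bearing no resemblance to each other, even though both follow exactly the same rule.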

Thus we return to the search for the Engram. One of my most notable examples of misuse of the concept was an episode of Star Trek: The Next Generation in which Dr. Crusher passionately declares: "The engram has wrapped itself around the cerebral cortex!" Nevertheless, and bad dialog aside, there is reliable evidence that experiences do leave a trace in the brain. As neuroscience increasingly delves into the molecular phenomena that accompany neural activity, we are finding that those traces may not necessarily be "wrapped around the cerebral cortex," but they include molecular and even genetic changes that persist long after the synaptic changes are accomplished.

Return of the Flatworm

In early 2014, a research paper (found at http://www.nature.com/neuro/journal/v17/n1/full/nn.3594.html) from Emory University threatened to change everything scientists thought they had figured out about memory. In their paper, Brian Dias and Kerry Ressler showed that when mice were trained to associate a particular smell with the sensation of a mild electrical shock to the foot (a mild tingling sensation, unpleasant but not harmful), the memory associating the smell with fear could be inherited for at least two subsequent generations. There has been much examination and criticism of the study, but here was an experiment that appeared to support the forty-year-old flatworm experiments! Instead of RNA as the carrier molecule, scientists now speculated that the inherited memory resulted from "epigenetic modification," that is, molecules inside the cells altered DNA, or at least altered the molecules responsible for reading and translating DNA during growth and development.

Many issues remain with the study. Epigenetic mechanisms typically involve molecules and DNA that are not contained in the nucleus of cells, but are passed directly from mother to offspring via their eggs. The fear memory in this experiment was passed from fathers to offspring; since ova contain a complete set of cellular components and sperm cells do not, the inheritance had to be a result of direct modification of DNA which was incorporated into the reproductive cells. Given that such "adaptive evolution" had been discredited since Darwin's writings in the nineteenth century, it was a difficult conclusion for scientists to accept.

Subsequent interpretations have focused on the fact that the inherited memory relied on changes in the olfactory system of the trained mice. Could the "memories" just be the result of differing sensitivity to the chemicals used in the original experiment? The authors say no, they controlled for that possibility, but other analyses disagree, including one which computes that a gene change in fully differentiated neural and olfactory cells being decoded and inserted into the non-differentiated reproductive cells was statistically improbable.

The current debate has renewed interest in the concept of how memories are acquired, whether they can be inherited, and whether other processes could have effects that may be interpreted as memory when they are not (such as the misnamed "muscle memory"). While these concepts have not yet invaded SF, they do tie in nicely with interest in the study of "flashbulb" memories and false memory.

Part III: I Remember When . . .

In general, memory is a weak process. Learning and remembering factual items requires repetition and retesting, something often found in military and adventure fiction regarding training for combat. If you want to remember a phone number, you repeat it, particularly if you can't write it down; interrupt the repetition, and you forget the information. Skills and more complex facts require more repetition, and learning them usually occurs over days. The misnamed "muscle memory" is not really based in muscle, but is definitely memory that integrates sensory information from the body, particularly limb and muscle position. Repetition interspersed with sleep periods incorporating a state known as "REM sleep" (for the rapid eye movements that occur, associated with dreaming) is very important to shuttling memory from short-term temporary memory to long-term memory (this is called "consolidation").

Memories are frequently hard to record and easy to erase (at the very least, easy to lose track of). However, there is a subset of memory and learning that is permanently stored after only a brief or even single exposure. Events that lead to what we call "flashbulb memories" include very strong emotion and/or stress/danger. When I was a graduate student, my professors used to precede the lecture with "do you remember what you were doing when Kennedy was shot?" Granted, I was a bit young for that question, and very soon the examples became the Moon landing, Challenger explosion, 9/11, etc. or more positive memories such as marriage proposals, weddings or birth of a child. Flashbulb memories tend to be clear memories with a lot of associated sensory information: day/night, temperature, cooking smells, music on the radio. As stated, the emotions can be positive or negative, and the accuracy of these single-instance memories is on par with "rehearsed" or repetitive event memories.

Erasing memory: research has shown that memory can be erased and even combined with new information every time a memory is recalled. ©2015, Lightspring, image licensed from Shutterstock.

In a Flash

The accuracy of flashbulb memories in the absence of repetition occurs because the emotional feedback strengthens the inputs to the hippocampus from the brain's system for assessing reward or significance of a memory. Original theories suggested that single-instance learning occurred due to survival mechanisms. For example: touching fire causes pain; therefore the memory "Do Not Touch Fire!" does not need to be repeated and is stored as an exceptionally strong memory.

The space shuttle Challenger explosion in 1986 provided a unique opportunity for memory researchers to investigate flashbulb memories. It was widely watched by the public, it was abrupt and surprising, and it had an emotional impact. One of the first findings was that flashbulb memory, rather than being unique in how it was stored, actually involved the same principles of converging stimulation described in Hebb's theory. When these memories occur in the presence of stress or danger, the reward/significance inputs are at their strongest. Recalling the memory, or sharing experiences of where and when people viewed or learned of the Challenger explosion, also generated a recall of emotions and physical sensations from the event. (Later, researchers would determine that when such memories become pathological, i.e., associated with PTSD or drug abuse, replaying the memory involves experiencing all of the negative emotional sensation again.)

Another outcome of the research into flashbulb memories was finding that the memories do not remain perfect representations of an event. In fact, it is the personal confidence with which the memory is recalled that is more indicative of a flashbulb memory than its accuracy. Thus, a person is confident that they recall all events vividly (due to the emotional context) regardless of the accuracy of their recall. Given that researchers already knew that storing a memory via strengthening synaptic connections involves activating genes and protein synthesis in the cell (to actually form the stronger connection), it came as a surprise in the last few years to discover that the connections are also slightly weakened at the time of each recall or replay of the memory—prior to re-strengthening the memory. Thus, repetition strengthens memories, but also risks re-writing or contaminating the memory. Reliving a flashbulb memory also relives the emotion, and in the context of strong emotion, there is the chance that some portion of current experience will be written along with the original memory, contaminating the accuracy of recall. The stronger the emotion, the greater the danger that other similar circumstances associated with recalling the memory will be conflated with the original memory.

Thus, we can have a person (like me) who remembers exactly what they were doing while watching JFK's funeral in 1963: I was on the floor, watching the (color) TV through the legs of my mother's ironing board. I distinctly remember seeing the funeral procession through the city streets. Unfortunately, I was a bit too young (four years old) and our family had no color TV. What I remember and associate with the event is a product of a similar event, President Eisenhower's funeral in 1969 (still no color TV), combined with watching color documentaries of the same scenes in later years.

Flashbulb memory is certainly worthy of inclusion in fiction, but is often ignored in favor of a related phenomenon called "false memory" (a favorite of mysteries and psychological fiction). The problem of false memory is highlighted by, but by no means exclusive to, flashbulb memory events. Recent years have seen the legal profession re-evaluating eyewitness testimony when circumstances may result in a false memory being absolutely accepted as true by the witness. For example, if a child experiences strong emotion during "analysis" by a psychiatrist or psychologist, the child may accept any suggestion by the analyst as a true memory. As with flashbulb memories, it is the emotional input that controls the memory. In a similar manner, PTSD involves memories in which the emotion is so strong, and the memory so clear and confident, that recall invokes a replay of the event so exact that the memory is "consolidated" and rewritten exactly the same, making it even harder to forget or suppress. Nevertheless, research is progressing into how to use our knowledge of the physiological mechanisms of memory storage to assist in therapies for individuals suffering from PTSD.

Forget Me Not

Memory and its opposite condition, amnesia, are popular topics in the news, as well as in literature, TV, and movies. "Hard" Science Fiction strives for accuracy, but it is difficult to maintain accuracy in a field that is constantly changing. It is important for both the author and the reader to understand that, as with many research fields, the science is not yet settled, and continues to evolve as research tools improve. On one hand, it is certainly true that we can now re-examine seemingly failed experiments, such as those with flatworms, in light of new genetics and epigenetics research into "inherited" memories. On the other, diseases and disorders of memory such as Alzheimer's disease still have more unanswered than answered questions, and remain a major topic of medical research and treatment. In addition, there are numerous other causes of memory impairment, ranging from the effects of drugs, to head injury, to the aftermath of chemotherapy and radiotherapy for cancer. Even with all of the new information at their disposal, scientists are continually learning new facts and mechanisms, and formulating new theories about memory and how it functions. Authors can and should do the same.

Memory shapes our experience. It provides a context for everything we do, and everything we learn. Memory is an important component of our personal sense of "self." It is truly a terrible thing to lose.

Copyright © 2015 Tedd Roberts

Tedd Roberts is the pseudonym of neuroscience researcher Robert E. Hampson, Ph.D., whose cutting-edge research includes work on the effects of drugs, radiation, and disease on memory function. His interest in public education and brain awareness has led him to the goal of writing accurate yet enjoyable brain science via blogging, short fiction, and nonfiction/science articles for the SF/F community. Tedd Roberts' other nonfiction articles for Baen.com, including the Hugo-nominated "Why Science is Never Settled," are available in Baen.com Free Nonfiction 2012, 2013, 2014, and 2015.