Nanotechnology to Enable Future Space Probes

Joseph E. Meany

Introduction

Exploring the solar system and finally ‘meeting the neighbors’ is one of the most hailed achievements of the twentieth century. Getting the chance to see, up close, the other major bodies in our Solar System is a wonder that was left to the imaginations of astronomers even as late as World War I. Carl Sagan famously said in the series Cosmos: “How lucky we are, to live in this time. The first moment in human history where we are, in fact, visiting other worlds.”

Even now, humans continue to break new ground as we send missions to comets, asteroids, and the Solar System’s most famous dwarf planet, Pluto. We’ve come a long way in the interim from the Mariner program, through the Voyager Probes, to the recent missions involving Dawn, New Horizons, and MAVEN.

Each of these craft, at the time of its launch, was equipped with more computational power and storage capability than the last, reflecting the state-of-the-art technology of the time. As computer components continue to get smaller, they get lighter and cheaper to produce. This means that we can gather and store more data about the solar system in subsequent missions. The technology has matured to the point where private citizens can even launch little satellites of their own into low Earth orbit for experiments! These little vessels are called CubeSats. However, there is a problem with traditional semiconductors as they get smaller and smaller.

As the size of circuits shrinks, the random noise from fluctuations in the circuitry grows. On top of this, materials stop behaving the way they do in bulk once quantum physics comes into play on the nanoscale. The nanoscale is measured in nanometers: one nanometer is one billionth of a meter, or roughly a hundred thousand times thinner than a human hair.

Both academic and industrial researchers are now working to address the issues that plague circuits on the nanoscale. Part of this effort involves moving away from silicon as the dominant carrier of electricity and toward carbon-based molecules. This field of research is called Organic Electronics. Another part is the development of new ways to construct circuits that bypass the limitations of more traditional manufacturing. Using known properties of atoms, scientists and engineers can use chemistry to coax the atoms to arrange themselves into specific patterns with desirable characteristics.

Current Computing

Gordon Moore predicted in a 1965 paper[1]:

“The complexity for minimum component costs has increased at a rate of roughly a factor of two per year (see graph). Certainly over the short term this rate can be expected to continue, if not to increase. Over the longer term, the rate of increase is a bit more uncertain, although there is no reason to believe it will not remain nearly constant for at least ten years.”

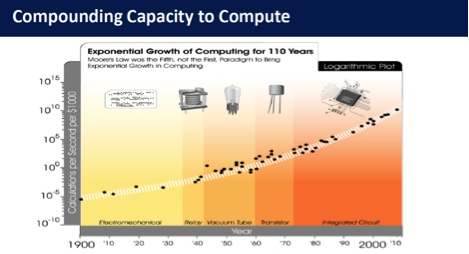

Source: Steve Jurvetson and Ray Kurzweil[2]

Indeed, this prediction has held well beyond the original ten-year scale into current technology production. It is named Moore’s Law in his honor. The eponymous “law” has its obvious limits. Components like transistors or diodes have shrunk over time; the problem is that eventually you get down to the scale of single atoms, and you can’t shrink those any further. Despite the modest time limit that Moore originally set, we have continuously broken past barriers and have created complex machines that fit in our pockets or explore distant planets.
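To get a feel for what "a factor of two per year" means, the arithmetic can be sketched in a few lines of Python. This is only an illustration of compound doubling. The function name and the starting count of 64 components are assumptions chosen for the example, not figures taken from this article.

```python
def projected_components(initial_count, years, doubling_period_years=1.0):
    """Project a component count that doubles every `doubling_period_years`.

    All parameter names are illustrative; the model is plain exponential
    growth and ignores the physical limits discussed in the text.
    """
    return initial_count * 2 ** (years / doubling_period_years)


# Ten years of yearly doubling multiplies the count by 2**10 = 1024.
growth_factor = projected_components(1, 10)

# A hypothetical chip with 64 components would reach 64 * 1024 after a decade.
after_decade = projected_components(64, 10)

# A slower, two-year doubling period gives only 2**5 = 32 over the same span.
slower_factor = projected_components(1, 10, doubling_period_years=2.0)

print(growth_factor, after_decade, slower_factor)
```

The point of the sketch is how sensitive the outcome is to the doubling period: changing it from one year to two turns a thousandfold gain per decade into a thirty-twofold one.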

Right now, organic electronics researchers are looking into the possibility of using graphene as the basis for transistors or two-dimensional conducting sheets. Graphene, which is a single carbon atom thick, has loosely bound electrons smeared out across the whole sample. This means that the electrons can easily move about on the surface. Carbon nanotubes (CNTs) are also being looked into for use as miniaturized wires. CNTs are related to graphene much in the same way that a piece of paper can be rolled into a tube. The electrons on nanotubes also move easily across the surface, and so make for excellent wires. In fact, CNTs can conduct electricity almost ten million times better than a copper wire of similar dimensions!

"Graphen" by AlexanderAlUS[3] (left) and a Carbon Nanotube (via phys.org) [4] (right). Each vertex is a single carbon atom.

Besides work on simple conducting materials, researchers are studying nanometer-wide balls of metal, called quantum dots, as a way to store data magnetically. This could allow us to make better memory for data storage.

Space Probes

As technology has progressed, researchers have been able to put ever more complex and sensitive instruments on board space probes. This, combined with better error checking and shielding, has let us gather information about our solar system by leaps and bounds.

But what kind of characteristics would be necessary for longer missions, making probes interstellar? We already know that the internal electronics need to be protected for a long time from the dangers of cosmic rays. If your wires break apart because they’ve been blasted by radiation, your circuits won’t work. We know that a program would have to be stored for quite a long time while being resistant to degradation. If your code becomes faulty, the probe won’t carry out its instructions. Or, it would carry them out incorrectly. We know we need a power source that won’t decay over time or present a hazard to the other parts of the craft, as a nuclear reactor does.

Working within the constraints of currently known physics, the most useful types of probes would be sent ahead to potentially habitable systems to gather data and scout for resources to be used by humans for when they arrive. Since communicating with Earth is limited to the speed of light, any useful data that the probes might send would be limited by how far away they traveled in the first place. Ultimately it’s dependent on the time taken for the probe to arrive plus the time necessary to send back data once it gets there.
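The "travel time plus signal time" bookkeeping above can be sketched as a small calculation. This is a rough illustration under simplifying assumptions: the probe cruises at a constant speed, acceleration and deceleration phases are ignored, and all times are measured from Earth. The function name, the 10%-of-light-speed cruise speed, and the choice of Alpha Centauri (about 4.37 light-years away) are assumptions made for the example.

```python
def years_until_data_arrives(distance_ly, cruise_speed_c):
    """Years from launch until the first data from the target reaches Earth.

    distance_ly    -- distance to the target in light-years
    cruise_speed_c -- constant cruise speed as a fraction of light speed
                      (hypothetical; no such probe exists today)
    """
    if not 0 < cruise_speed_c <= 1:
        raise ValueError("cruise speed must be a fraction of c in (0, 1]")
    travel_years = distance_ly / cruise_speed_c  # outbound trip
    signal_years = distance_ly                   # radio signal returns at c
    return travel_years + signal_years


# A probe cruising at 10% of light speed toward Alpha Centauri:
# roughly 44 years of travel plus a little over 4 years for the signal.
wait = years_until_data_arrives(4.37, 0.1)
print(f"{wait:.1f} years")
```

Even at a speed far beyond anything launched so far, the people who send the probe would wait nearly half a century for the first report, which is why the probe needs to act on its own.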

Right now space probes are used only as eyes and ears for us to observe where we are in the universe, but by the time we are ready to really explore for ourselves we will need a machine capable of being much more independent. The probe would have to better analyze its surroundings, actually manipulate matter, even create habitats for the humans coming behind it. To ensure that it could continue exploring, it might even have to be able to make more of itself.

A class of hypothetical machines, designed by John von Neumann in the 1940s, has been written about for a long time in science fiction literature. He called these machines “universal constructors,” but over time they came to bear his name as Von Neumann Machines. The core idea behind a Von Neumann Machine is an automaton that contains enough information and working parts to replicate itself indefinitely.

At the current level of technology, true Von Neumann Machines (VNMs) aren’t possible. They require so much information, energy input, and materials that one wouldn’t be able to get off the ground. The information, obviously, comes in the form of memory and programming. Energy would have to come from some sort of power plant, whether it comes from a battery or a series of chemical reactions, like a variation on metabolism. The materials would need to be harvested from the environment and processed into a useful form. This means that some sort of refinery or digestion process is needed.

Our probes are so comparatively simple, nearly devoid of internal awareness, that fixing any issues along the way is either impractical or impossible. Planning for many different contingencies is exactly why modern probes are so tough to engineer, but engineering a VNM would require the ability to anticipate and handle all or most possible scenarios. This would be computationally impossible for a single, small craft. We need to find a better way, and I think that’s where taking a page out of the book of living things would come in handy. That idea is popularly called bioinspiration. Because of this, I suggest that a successful probe would not be one discrete automaton but rather a conglomeration of many smaller parts working in sync.

Nanotech in Brief

Nanotechnology can help form the components that make up the core machinery of the probe. As I mentioned above, it is not out of the realm of possibility that a carbon-based computer will eventually be built. That, combined with the modifications we are making to current semiconductors today, would make possible a system containing robots-within-robots, automatons whose very specific tasks are to ensure the survival and success of the whole.

Nanomachines have been made, experimentally, at the lab of Professor James Tour in 2010. He and his team were able to demonstrate that little “cars” made from carbon with wheels of fullerene (spherical carbon cages) will roll across a metal surface just like a car on a road. Last year, at the University of Texas at Austin, Professor Donglei Fan and her group were able to make a nanoscale motor, one that rotated when energy was applied and was smaller than a human blood cell.

Creating a nanoscale machine is no easy task. If you look closely at a glass of water that has little dust motes floating in it, they move around seemingly randomly. Part of this is due to convection currents in the water, and part of it is due to the random movements of the molecules jostling each other around. This random movement is called Brownian Motion. It is like thinking about assembling a string of connected billiard balls, then using that string to move other balls around as they’re rapidly moving around the table. It’s pretty chaotic down on the atomic scale. Overcoming Brownian Motion reliably and efficiently is the biggest engineering hurdle for any nanomachine, so tests would need to demonstrate a lot of reproducible results to be considered valid.

Recent popular science articles in the media have focused on Moore’s Law. The law has even been adapted to explain phenomena (like business models) unrelated to computing. Intel has stated that it will no longer use silicon after transistors reach a 7 nm size. At that point, transistors are only roughly 40 silicon atoms wide! You can’t ignore quantum effects at that level, and typical manufacturing techniques just get much too expensive to be profitable. This is where the work on graphene and CNT circuitry will really shine, as these both work reliably around 1 nm in diameter. Organic circuitry might be the “metal” of the future.

The normal-scale bodies we encounter every day are actually run on and maintained by atomic-scale events. A probe that we send to the far reaches of the galaxy could not rely on a single source of power. Just as we humans do not make extended cross-country drives with just a ham sandwich, we need to devise a way for a probe to gather its own energy in the interstellar medium. That answer will probably come from a better understanding of what exactly is within the space between the stars. The craft would need to take into account the fact that whatever energy it spends on acceleration, it will have to spend again on deceleration. Just as pit stops and gas stations along a route help keep a traveler gassed and fed, a probe would need to be able to detect and refuel at comets, asteroids, or other small bodies. Specialized chemical catalysts or quantum devices could help turn these raw materials into “food” and then, subsequently, energy.

Researchers have found a way to store and read data as an archive by using DNA and a new way of deciphering the data. Regular computers use binary coding to operate, and the method that George Church, Yuan Gao, and Sriram Kosuri demonstrated in 2012 wrote one binary digit per DNA base. A later scheme, published by Nick Goldman's group in 2013, actually uses the trinary system. Instead of binary, with base two using 1 and 0, trinary uses 0, 1 and 2 (or off, low, and high if you like).
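To make the trinary idea concrete, here is a minimal sketch of how a number could be written in base three and then spelled out in DNA letters. The mapping is an assumption for illustration only and is not the exact table from any published scheme: each trinary digit picks one of the three bases that differ from the previous base, so the same letter never appears twice in a row. The function names and the starting base "A" are likewise hypothetical.

```python
BASES = "ACGT"


def to_trits(value, width):
    """Write a non-negative integer as `width` base-3 digits, most significant first."""
    digits = []
    for _ in range(width):
        digits.append(value % 3)
        value //= 3
    return digits[::-1]


def trits_to_dna(trits, start="A"):
    """Encode trinary digits as bases, never repeating the previous base."""
    previous = start
    strand = []
    for trit in trits:
        # Three candidates remain once the previous base is excluded.
        choices = [b for b in BASES if b != previous]
        previous = choices[trit]
        strand.append(previous)
    return "".join(strand)


def dna_to_trits(strand, start="A"):
    """Invert trits_to_dna by replaying the same exclusion rule."""
    previous = start
    trits = []
    for base in strand:
        choices = [b for b in BASES if b != previous]
        trits.append(choices.index(base))
        previous = base
    return trits


trits = to_trits(5, 3)          # 5 = 0*9 + 1*3 + 2
strand = trits_to_dna(trits)
print(trits, strand, dna_to_trits(strand))
```

Four bases with "no repeats" leave exactly three choices at every step, which is why base three is a natural fit for this style of encoding.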

The stability and lifetime of DNA sequences matter if DNA is to compete against regular magnetic tapes or hard drives. Woolly mammoth DNA from 60,000 years ago gives us an initial stability benchmark. It was preserved in just the right way on Earth all that time, and was still readable by sequencers. A study of DNA degradation in space has not been done yet, and that is a necessary question to answer. The storage medium would need to be well protected from cosmic radiation to prevent errors, and the decoding methods would need to be much more accurate than they are now.

Information density in organic molecules is tremendous: a full 1 million times greater than in regular hard drives. This might give us the ‘brain’ that we’re looking for in the probe. Organic molecules are much lighter than metal and traditional magnetic drive materials, which could help reduce the overall weight of the probe and therefore the energy required to accelerate and decelerate it. Organic molecules do tend to have a lower absorption of neutrons and heavy cosmic rays compared to metallic elements, but are more prone to permanent failure should a break occur. This is where redundancy would absolutely be necessary.

Nanotech Addressing the Challenges

So we have a hybrid system: magnetic, quick-access, RAM-like memory mixed with longer-term DNA-based storage, which would help to carry the mission program to the stars efficiently while saving space and mass. We have a power system designed to harvest material from the environment and break it down for fuel. And we have electronic muscles made from a mixture of carbon- and metal-containing structures.

All of this would be put together as a larger body composed of smaller individual parts that distribute the work. Instructions built into specialized nanometer- and micrometer-sized bots would let the system function under a variety of situations. Imagine if you had to actively devote mental energy to healing every bruise or cut. Or if you had to actively think about pumping blood through your veins. You wouldn’t get a whole lot done in the day! Our bodies have specialized parts built into them that have been refined over the eons. They have evolved to act on the principles of chemistry, from the smallest molecule-and-enzyme reaction to governing our oxygen levels and internal temperature.

Eventually, we will have the ability to create machines that will be able to make decisions for themselves. They will assess their internal and external environments, then make decisions based on those readings. This complexity of artificial intelligence combined with a system that acts with tiny machines all in sync looks eerily familiar. And it looks like Life.

[1] Moore, G. "Cramming More Components onto Integrated Circuits." Reprinted in Proceedings of the IEEE, Vol. 86, No. 1, January 1998 http://www.cs.utexas.edu/~fussell/courses/cs352h/papers/moore.pdf

[2] http://digitalcommons.usu.edu/cgi/viewcontent.cgi?article=3164&context=smallsat

[3] "Graphen" by AlexanderAlUS - Own work. Licensed under CC BY-SA 3.0 via Wikimedia Commons http://commons.wikimedia.org/wiki/File:Graphen.jpg#mediaviewer/File:Graphen.jpg

[4] Researchers uncover recipe for controlling carbon nanotubes Oct 14, 2009 http://phys.org/news174752422.html

Copyright © 2015 Joseph E. Meany

Joseph Meany, originally from Keene, NH, is a PhD Candidate in Chemistry at The University of Alabama. His research focuses on the design of molecules for nanomaterials in tiny electronic circuits. Space exploration and science fiction were Joseph's inspiration to become a scientist. In his spare time, he enjoys beer brewing and attending SF conventions.